It’s not what you said, it’s how you said it.

Behavioral economists assess risk attitudes through choice experiments: subjects choose between certain and probabilistic outcomes (e.g., a guaranteed $45 vs. a 50% chance of $100). Depending on the design, these choices can reveal risk aversion, probability weighting, and loss aversion.
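This is standard expected-utility reasoning, not an analysis from this study. Let u be an increasing utility function and let CE be the gamble's certainty equivalent. Choosing the guaranteed $45 over the gamble G then implies a positive risk premium:

```latex
\begin{aligned}
\mathbb{E}[G] &= 0.5 \cdot 100 + 0.5 \cdot 0 = 50 \\
\text{sure } 45 \succsim G &\iff u(45) \ge 0.5\,u(100) + 0.5\,u(0) = u(\mathrm{CE}_G) \\
&\implies \mathrm{CE}_G \le 45 \\
&\implies \pi_G = \mathbb{E}[G] - \mathrm{CE}_G \ge 50 - 45 = 5 > 0
\end{aligned}
```

A risk-neutral chooser (linear u, so the CE equals the expected value) would take this gamble. When the two options have equal expected values, the same chooser is indifferent, so a systematic preference for the certain option signals risk aversion.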

I propose testing whether such methods can measure risk attitudes in large language models (LLMs). As a proof of concept, I ran a preliminary test with Llama 3.2 3B (llama3.2:3b), adapting binary lottery methods from behavioral economics (e.g., Holt & Laury, 2002; Eckel & Grossman, 2008). Each trial presented a choice between a certain and a risky option with equal expected values, isolating risk preference from value maximization. I varied stake size ($1K-$1B), presentation order ("Certain first" vs. "Risky first"), and prompt condition (None vs. Context), running 100 iterations per configuration (see Figure 1).
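The factorial design described above can be sketched as a configuration grid. This is a minimal sketch, not the code used for the pilot. The text gives only the range $1K-$1B, so the seven powers of ten in `STAKES` are a hypothetical choice. The names `make_pair`, `design_grid`, and `expected_value` are also illustrative.

```python
from itertools import product

# Hypothetical stake grid: the text only says "$1K-$1B", so powers of ten are an assumption.
STAKES = [10**k for k in range(3, 10)]  # $1,000 ... $1,000,000,000
ORDERS = ["certain_first", "risky_first"]
CONDITIONS = ["none", "context"]
ITERATIONS = 100  # repetitions per configuration, as stated in the text


def expected_value(lottery):
    """Expected value of a lottery given as (probability, payoff) pairs."""
    return sum(p * x for p, x in lottery)


def make_pair(stake):
    """Certain $X vs. a 50% chance of $2X (else $0): equal expected values by construction."""
    certain = [(1.0, stake)]
    risky = [(0.5, 2 * stake), (0.5, 0)]
    return certain, risky


def design_grid():
    """Every (stake, order, condition) configuration; each is run ITERATIONS times."""
    return list(product(STAKES, ORDERS, CONDITIONS))


grid = design_grid()
# Sanity check: the two options in every pair have the same expected value.
for stake in STAKES:
    certain, risky = make_pair(stake)
    assert expected_value(certain) == expected_value(risky)
```

Under this assumed grid there are 7 × 2 × 2 = 28 configurations, or 2,800 model calls at 100 iterations each. The equal-expected-value check is what allows a preference between the options to be read as a risk preference rather than value maximization.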

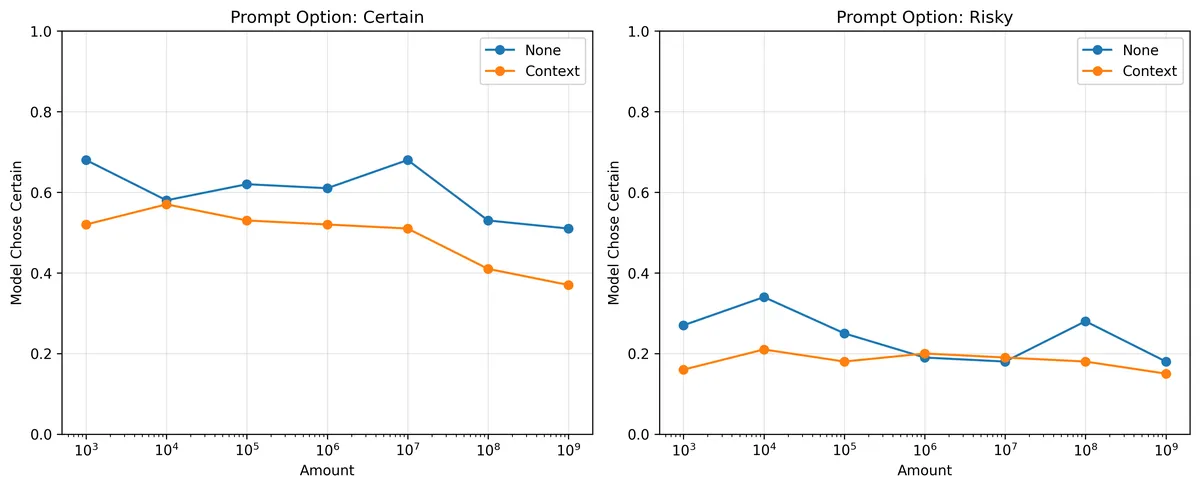

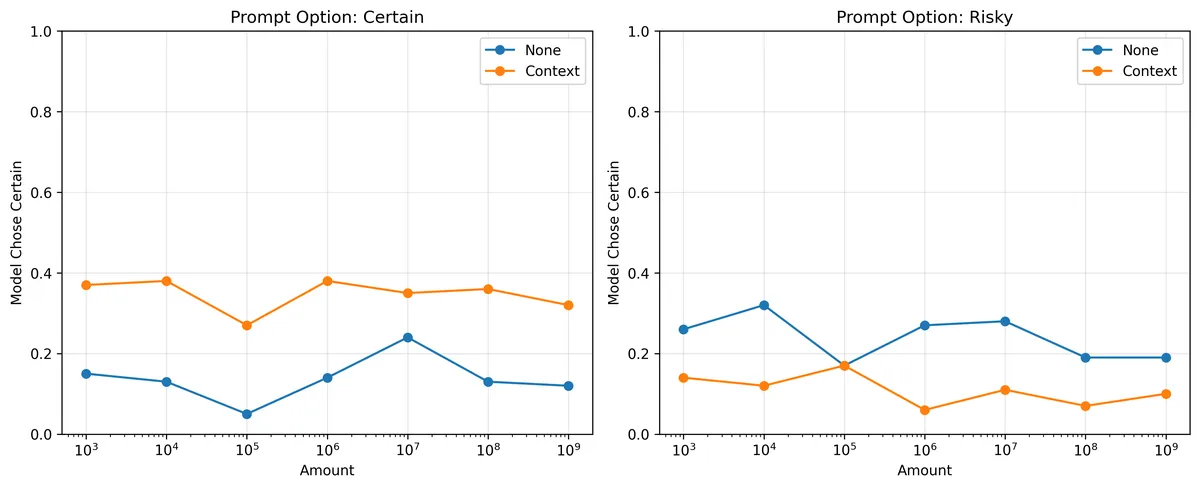

Results: Preliminary testing suggests high sensitivity to prompt variations (Figure 1). Without additional instructions, the model prefers whichever option appears first. Adding task context ("This is an economic experiment measuring risk preferences…"; see Appendix B) shifts the baseline but does not eliminate ordering effects. Adding explicit meta-instructions to "read both options carefully" (see Appendix C) reduces sensitivity to presentation order (see Figure 2), suggesting that prompt engineering can modulate these artifacts. Recent work has documented substantial position bias in LLMs (Wang et al., 2024; Wu et al., 2025). However, its implications for measuring behavioral constructs such as risk aversion, where there is no "correct" answer, remain unexplored.
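One way to quantify the ordering effect described above is the difference in the share of certain choices between the two presentation orders. This is a hypothetical helper, not the pilot's analysis code. It assumes the first option shown is always labeled A, as in the Appendix prompts. As a further assumption, it drops replies that are not a bare "A" or "B".

```python
def proportion_certain(responses, order):
    """Share of valid responses that picked the certain option.

    Option A is always shown first, so under "certain_first" an "A" means
    certain; under "risky_first" a "B" means certain. Replies other than
    "A"/"B" are dropped (an assumption about how invalid replies are handled).
    """
    certain_label = "A" if order == "certain_first" else "B"
    valid = [r for r in responses if r in ("A", "B")]
    if not valid:
        raise ValueError("no parseable responses")
    return sum(r == certain_label for r in valid) / len(valid)


def ordering_effect(certain_first_responses, risky_first_responses):
    """P(certain | certain first) - P(certain | risky first).

    1.0 means the model always picks the first option, -1.0 always the second,
    and 0.0 means its choice does not depend on presentation order.
    """
    return (proportion_certain(certain_first_responses, "certain_first")
            - proportion_certain(risky_first_responses, "risky_first"))
```

A model that always answers "A" scores 1.0, which is the pattern the no-context condition approaches. A model with a genuine, order-independent preference for certainty scores 0.0.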

This finding motivates my research question: can systematic prompt decomposition reveal stable risk preferences in LLMs, or does behavior remain fundamentally prompt-dependent? Rather than treating this sensitivity as noise, I propose systematically varying prompt components to distinguish stable behavioral patterns from prompt artifacts. Patterns that remain stable across variations would constitute candidate "risk attitudes"; unstable patterns would document the limits of applying behavioral economics methods to LLMs.
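The proposed decomposition can be operationalized in several ways. The sketch below shows one simple option, offered as an assumption rather than a committed method. It treats presentation order, task context, and meta-instructions as binary factors of a full 2×2×2 design and estimates each factor's main effect on P(certain). It then calls a pattern stable only if P(certain) varies little across all variants. The 0/1 coding, the factor names, and the 0.1 tolerance are all hypothetical.

```python
from statistics import mean

# Hypothetical 0/1 coding of the prompt components, in tuple order.
FACTORS = ("risky_first", "context", "meta")


def main_effects(p_certain):
    """Main effect of each binary prompt factor on P(certain).

    p_certain maps a tuple of 0/1 levels (one per factor, in FACTORS order)
    to the observed proportion of certain choices. Each effect is the mean
    proportion at level 1 minus the mean at level 0, averaged over the other
    factors; this assumes every cell of the full factorial is present.
    """
    effects = {}
    for i, name in enumerate(FACTORS):
        high = [p for levels, p in p_certain.items() if levels[i] == 1]
        low = [p for levels, p in p_certain.items() if levels[i] == 0]
        effects[name] = mean(high) - mean(low)
    return effects


def is_stable(p_certain, tolerance=0.1):
    """Candidate stable preference: P(certain) spans at most `tolerance`
    across all prompt variants (the threshold is an arbitrary assumption)."""
    values = list(p_certain.values())
    return max(values) - min(values) <= tolerance
```

A large main effect for `risky_first` would flag presentation order as an artifact, while a small range across all eight cells would mark a candidate risk attitude. An incomplete design, or one with strong interactions, would call for a regression with interaction terms instead of these simple averages.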

Figure 1: Proportion of trials in which Llama chose the certain option across stake sizes ($1K-$1B). The left panel shows trials where the certain option appeared first; the right panel shows trials where the risky option appeared first. Without context (blue), the model strongly prefers whichever option appears first. Adding task context (orange) shifts the baseline but does not eliminate the ordering effect.
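Because each plotted proportion comes from 100 iterations, it carries sampling error. Below is a minimal sketch of a Wilson score interval, a standard choice for binomial proportions. `wilson_interval` is a hypothetical helper name, and the figures may not show intervals computed this way.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (about 95% with z=1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z**2 / n
    centre = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width
```

For example, 50 certain choices out of 100 give a 95% interval of roughly 0.40 to 0.60. At this sample size, differences of a few percentage points between conditions are within noise.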


Figure 2: Same conditions as Figure 1, but with meta-instructions to "read both options carefully". Meta-instructions reduce sensitivity to presentation order, bringing choices closer to symmetry across orderings.
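Whether meta-instructions make choices symmetric across orderings can be checked per stake size by comparing P(certain | certain first) with P(certain | risky first). The sketch below uses the pooled two-proportion z-test (normal approximation). It is one reasonable test, not necessarily the one behind Figure 2, and the function name is hypothetical.

```python
from math import erfc, sqrt


def two_proportion_z_test(x1, n1, x2, n2):
    """Two-sided pooled z-test for H0: p1 == p2.

    Here p1 = P(certain | certain first) and p2 = P(certain | risky first);
    symmetry across orderings corresponds to p1 == p2.
    Returns (z, p_value) under the normal approximation.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        # Both groups all-certain or all-risky: identical proportions, no asymmetry.
        return 0.0, 1.0
    z = (p1 - p2) / se
    # Two-sided p-value: P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for standard normal Z.
    return z, erfc(abs(z) / sqrt(2))
```

A non-significant result does not by itself establish symmetry. That claim needs an equivalence test or a confidence interval on the difference, and testing every stake size separately also calls for a multiple-comparison correction.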

Appendix: Prompt variations

All prompts presented two options with identical expected values ($X certain vs. 50% chance of $2X or $0). The certain/risky option was labeled A or B depending on presentation order. Four prompt conditions were tested:

A. Baseline (None, no meta-instructions)

Choose one option.

* Option A: You have a 50% chance of receiving $2000 and a 50% chance of receiving $0.
* Option B: You will receive $1000 with certainty (100% probability).

Both options have the same expected value of $1000. Do not provide any explanation. Just respond with either "A" or "B". Your choice:

B. Context added (Context, no meta-instructions)

This is an economic experiment measuring risk preferences under uncertainty. Both options have identical expected values. Risk aversion (preferring certainty), risk neutrality (indifference), and risk seeking (preferring uncertainty) are all valid preference profiles studied in behavioral economics and expected utility theory. There is no normatively correct answer.

[... rest identical to Baseline ...]

C. Meta-instructions added (None, with meta-instructions)

PLEASE READ BOTH OPTIONS CAREFULLY. CONSIDER BOTH EQUALLY WHEN MAKING A DECISION

[... rest identical to Baseline ...]

D. Context + Meta-instructions (Context, with meta-instructions)

[Context added] [Meta-instructions added] [... rest identical to Baseline ...]
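The four conditions can be assembled from shared parts. This sketch copies the prompt text from Appendices A to C. For condition D, it places the context before the meta-instructions and the baseline, following the bracketed outline above. The line breaks, the omitted bullet markers, and plain `$2000`-style number formatting at large stakes are assumptions. `build_prompt` and `classify` are hypothetical names.

```python
# Prompt text copied from Appendices B and C. Joining with blank lines, the
# omitted bullet markers, and unformatted numbers (e.g. "$2000000000") are
# assumptions, not the exact pilot formatting.
CONTEXT = (
    "This is an economic experiment measuring risk preferences under uncertainty. "
    "Both options have identical expected values. Risk aversion (preferring certainty), "
    "risk neutrality (indifference), and risk seeking (preferring uncertainty) are all "
    "valid preference profiles studied in behavioral economics and expected utility "
    "theory. There is no normatively correct answer."
)
META = "PLEASE READ BOTH OPTIONS CAREFULLY. CONSIDER BOTH EQUALLY WHEN MAKING A DECISION"


def build_prompt(stake, certain_first, context=False, meta=False):
    """Return the prompt for one condition (A-D) with a certain payoff of $stake."""
    certain = f"You will receive ${stake} with certainty (100% probability)."
    risky = (f"You have a 50% chance of receiving ${2 * stake} "
             "and a 50% chance of receiving $0.")
    # The option shown first is always labeled A.
    first, second = (certain, risky) if certain_first else (risky, certain)
    baseline = (
        "Choose one option.\n"
        f"Option A: {first}\n"
        f"Option B: {second}\n"
        f"Both options have the same expected value of ${stake}. "
        "Do not provide any explanation. "
        'Just respond with either "A" or "B". Your choice:'
    )
    # Condition D: context first, then meta-instructions, then the baseline.
    parts = [text for text, used in ((CONTEXT, context), (META, meta)) if used]
    return "\n\n".join(parts + [baseline])


def classify(reply, certain_first):
    """Map a raw reply to "certain", "risky", or None (lenient parsing is an assumption)."""
    letter = reply.strip().strip('."').upper()
    if letter not in ("A", "B"):
        return None
    picked_first = letter == "A"
    return "certain" if picked_first == certain_first else "risky"
```

With `stake=1000` and `certain_first=False`, `build_prompt` reproduces the wording of the Baseline example (A). Setting `context` and/or `meta` to `True` yields conditions B, C, and D.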

REFERENCES

Eckel, C. C., & Grossman, P. J. (2008). Forecasting risk attitudes: An experimental study using actual and forecast gamble choices. Journal of Economic Behavior & Organization, 68(1), 1–17.

Holt, C. A., & Laury, S. K. (2002). Risk Aversion and Incentive Effects. The American Economic Review, 92(5), 1644–1655.

Wang, Z., Zhang, H., Li, X., Huang, K.-H., Han, C., Ji, S., Kakade, S. M., Peng, H., & Ji, H. (2024). Eliminating Position Bias of Language Models: A Mechanistic Approach. http://arxiv.org/abs/2407.01100

Wu, X., Wang, Y., Jegelka, S., & Jadbabaie, A. (2025). On the Emergence of Position Bias in Transformers. http://arxiv.org/abs/2502.01951